Messages like the following appeared in the Nginx error log on one of our servers:

2017/11/22 08:21:02 [crit] 29098#29098: *174583882 open() "/var/www/public/blackfriday/img/tabs/img4.png" failed (24: Too many open files), client: 176.113.144.142, server: example.com, request: "GET /blackfriday/img/tabs/img4.png HTTP/2.0", host: "example.com"

Let's work out how to fix this error on CentOS 7.

First, check the current soft/hard limits on open files (file descriptors) for the Nginx master process:

cat /proc/$(cat /var/run/nginx.pid)/limits|grep open.files

Max open files 1024 4096 files

and for the worker (child) processes:

ps --ppid $(cat /var/run/nginx.pid) -o %p|sed '1d'|xargs -I{} cat /proc/{}/limits|grep open.files

Max open files 1024 4096 files

Max open files 1024 4096 files

Max open files 1024 4096 files
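The two checks above can be wrapped in small helpers. This is only a sketch: the function names `nofile_limits` and `nginx_limits` are made up for illustration, and the pid file path is the one assumed in the commands above.

```shell
# Print "soft hard" open-file limits from /proc/<pid>/limits-style text on stdin.
nofile_limits() {
    awk '/^Max open files/ { print $4, $5 }'
}

# Print "<pid> <soft> <hard>" for the master process and each direct child.
# $1 = path to the nginx pid file (assumed: /var/run/nginx.pid).
nginx_limits() {
    master=$(cat "$1") || return 1
    for pid in "$master" $(pgrep -P "$master"); do
        printf '%s %s\n' "$pid" "$(nofile_limits < "/proc/$pid/limits")"
    done
}

# Usage:
#   nginx_limits /var/run/nginx.pid
```

Printing the PID next to each pair makes it easier to spot a worker that still runs with the old limits after a reload.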

Open /etc/security/limits.conf for editing and add the following lines:

...

nginx soft nofile 10000

nginx hard nofile 30000

Add the following line to the main (top-level) context of nginx.conf:

...

worker_rlimit_nofile 30000;

...

Check the web server configuration for errors and reload it:

nginx -t && nginx -s reload

Check the new limits applied to the Nginx worker processes:

ps --ppid $(cat /var/run/nginx.pid) -o %p|sed '1d'|xargs -I{} cat /proc/{}/limits|grep open.files

Max open files 10000 30000 files

Max open files 10000 30000 files

Max open files 10000 30000 files

The new limits may not be applied to every worker process; in that case, restart nginx with:

service nginx restart

2015/09/03 19:22:12 [crit] 3427#0: accept4() failed (24: Too many open files)

I'm thinking now that they’re attacking via the direct IP, but the redirect isn't enough to stop them from making me reach my open file limit. Is there anything I can do to stop this?

EDIT: I don’t believe they’re attacking the IP directly because the software I have running on the site still detected the massive amounts of traffic that the attacker caused.

87.135.112.116 - - [05/Sep/2015:14:47:53 +0200] "GET /index.php HTTP/1.0" 200 15767 "-" "Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.1; WOW64; Trident/4.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET4.0C; .NET4.0E; Media Center PC 6.0)"

113.22.35.98 - - [05/Sep/2015:14:47:53 +0200] "GET /index.php HTTP/1.0" 200 15767 "-" "Opera/9.80 (X11; Linux i686; Ubuntu/14.10) Presto/2.12.388 Version/12.16"

2.50.56.236 - - [05/Sep/2015:14:47:54 +0200] "GET /index.php HTTP/1.0" 200 15767 "-" "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/43.0.2357.81 Safari/537.36"

93.170.133.26 - - [05/Sep/2015:14:47:54 +0200] "GET /index.php HTTP/1.0" 200 15767 "-" "Mozilla/5.0 (Windows NT 6.3; rv:36.0) Gecko/20100101 Firefox/36.0"

I am a Linux and Nginx novice, but have learnt enough to get it installed and running and working as a simple reverse proxy for two internal webservers. This has been running fine for months, but I recently started getting 500 errors.

Here is the recent output of /var/log/nginx/error.log

(I have replaced our company name with «companyname.com» and replaced our public WAN IP address with «<WANIP>»)

2020/02/10 15:17:49 [alert] 1069#1069: *1011 socket() failed (24: Too many open files) while connecting to upstream, client: 10.10.10.1, server: web1.companyname.com, request: "GET / HTTP/1.0", upstream: "https://<WANIP>:443/", host: "web1.companyname.com"

2020/02/10 15:21:41 [alert] 1069#1069: *2022 socket() failed (24: Too many open files) while connecting to upstream, client: 10.10.10.1, server: web2.companyname.com, request: "GET / HTTP/1.0", upstream: "https://<WANIP>:443/", host: "web2.companyname.com"

2020/02/10 15:33:28 [alert] 1084#1084: *19987 socket() failed (24: Too many open files) while connecting to upstream, client: 10.10.10.1, server: web2.companyname.com, request: "GET / HTTP/1.0", upstream: "https://<WANIP>:443/", host: "web2.companyname.com"

2020/02/10 15:34:16 [alert] 1084#1084: *39974 socket() failed (24: Too many open files) while connecting to upstream, client: 10.10.10.1, server: web1.companyname.com, request: "GET / HTTP/1.0", upstream: "https://<WANIP>:443/", host: "web1.companyname.com"

2020/02/10 15:50:30 [error] 1086#1086: *1 client intended to send too large body: 4294967295 bytes, client: 176.58.124.134, server: london.companyname.com, request: "GET /msdn.cpp HTTP/1.1", host: "<WANIP>"

2020/02/10 16:32:56 [alert] 1086#1086: *19989 socket() failed (24: Too many open files) while connecting to upstream, client: 10.10.10.1, server: web1.companyname.com, request: "GET / HTTP/1.0", upstream: "https://<WANIP>:443/", host: "web1.companyname.com"

nginx soft nofile 10000

nginx hard nofile 30000

fs.file-max=70000

Any tips or suggestions are greatly appreciated. Thanks.

The server is Ubuntu 13.04 (GNU/Linux 3.9.3-x86_64-linode33 x86_64).

nginx is nginx/1.2.6.

I’ve been working on this for several hours now, so here’s what I’m getting and here’s what I’ve done.

tail -f /usr/local/nginx/logs/error.log

2013/06/18 21:35:03 [crit] 3427#0: accept4() failed (24: Too many open files)

2013/06/18 21:35:04 [crit] 3427#0: accept4() failed (24: Too many open files)

2013/06/18 21:35:04 [crit] 3427#0: accept4() failed (24: Too many open files)

2013/06/18 21:35:04 [crit] 3427#0: accept4() failed (24: Too many open files)

2013/06/18 21:35:04 [crit] 3427#0: accept4() failed (24: Too many open files)

2013/06/18 21:35:04 [crit] 3427#0: accept4() failed (24: Too many open files)

2013/06/18 21:35:04 [crit] 3427#0: accept4() failed (24: Too many open files)

2013/06/18 21:35:04 [crit] 3427#0: accept4() failed (24: Too many open files)

2013/06/18 21:35:04 [crit] 3427#0: accept4() failed (24: Too many open files)

2013/06/18 21:35:04 [crit] 3427#0: accept4() failed (24: Too many open files)

2013/06/18 21:35:04 [crit] 3427#0: accept4() failed (24: Too many open files)

2013/06/18 21:35:04 [crit] 3427#0: accept4() failed (24: Too many open files)

2013/06/18 21:35:05 [crit] 3426#0: accept4() failed (24: Too many open files)

geuis@localhost:~$ ps aux | grep nginx

root 3422 0.0 0.0 39292 380 ? Ss 21:30 0:00 nginx: master process /usr/local/nginx/sbin/nginx

nobody 3423 3.7 18.8 238128 190848 ? S 21:30 0:13 nginx: worker process

nobody 3424 3.8 19.0 236972 192336 ? S 21:30 0:13 nginx: worker process

nobody 3426 3.6 19.0 235492 192192 ? S 21:30 0:13 nginx: worker process

nobody 3427 3.7 19.0 236228 192432 ? S 21:30 0:13 nginx: worker process

nobody 3428 0.0 0.0 39444 468 ? S 21:30 0:00 nginx: cache manager process

Modified soft/hard limits in /etc/security/limits.conf (settings from the end of the file)

root soft nofile 65536

root hard nofile 65536

www-data soft nofile 65536

www-data hard nofile 65536

nobody soft nofile 65536

nobody hard nofile 65536

A reading of the max files

cat /proc/sys/fs/file-max

500000

And in /etc/pam.d/common-session:

session required pam_limits.so

With this added and the server restarted for good measure, for nginx I count the soft/hard limits by getting the parent process’s PID and:

cat /proc/<PID>/limits

Limit Soft Limit Hard Limit Units

Max open files 1024 4096 files

The parent process runs as ‘root’ and the 4 workers run as ‘nobody’.

root 2765 0.0 0.0 39292 388 ? Ss 00:03 0:00 nginx: master process /usr/local/nginx/sbin/nginx

nobody 2766 3.3 17.8 235336 180564 ? S 00:03 0:21 nginx: worker process

nobody 2767 3.3 17.9 235432 181776 ? S 00:03 0:21 nginx: worker process

nobody 2769 3.4 17.9 236096 181524 ? S 00:03 0:21 nginx: worker process

nobody 2770 3.3 18.3 235288 185456 ? S 00:03 0:21 nginx: worker process

nobody 2771 0.0 0.0 39444 684 ? S 00:03 0:00 nginx: cache manager process

I’ve tried everything I know how to do and have been able to get from Google. I cannot get the file limits for nginx to increase.

If you have worked with programs that handle a very large number of file descriptors, such as distributed databases like Elasticsearch, you have almost certainly run into the «too many open files» error in Linux.

In this short article we'll look at what this error means and how to fix it in different situations.

Literally, the error means that a program has opened too many files and is not allowed to open any more. Linux enforces strict limits on the number of open files for each process and each user.

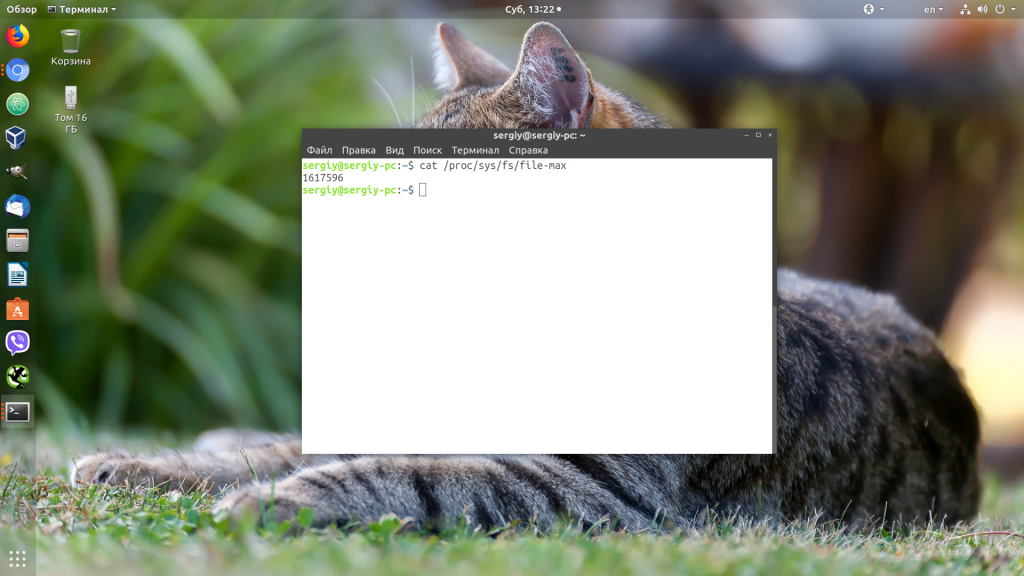

To see how many files can be open on your system as a whole, run:

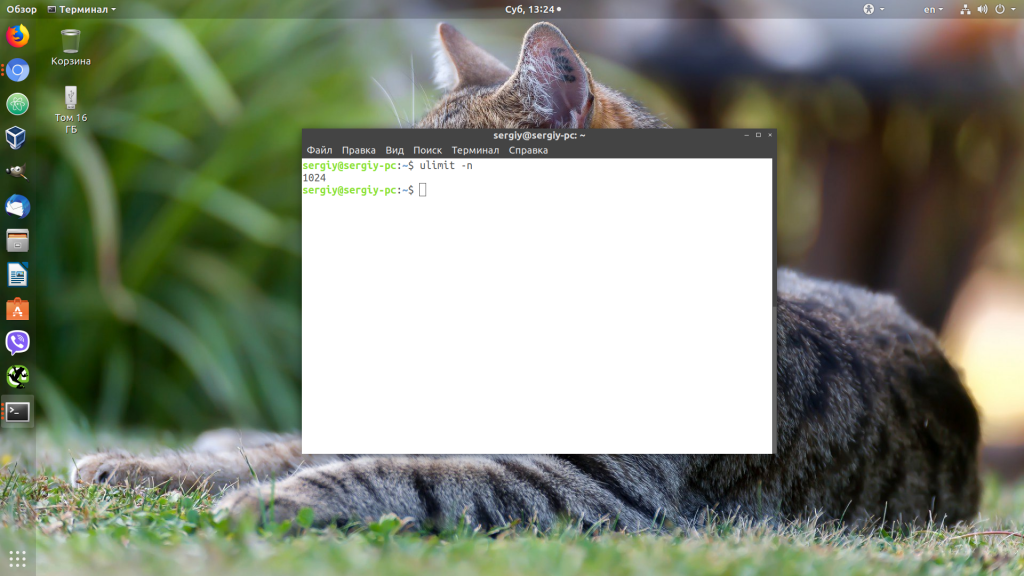

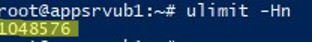

To see the current limits on open files for a user, run:

The ulimit utility reports two kinds of limits, hard and soft. You can move the soft limit in either direction as long as it does not exceed the hard limit. An ordinary user can only lower the hard limit. The superuser can change both limits as needed. Soft limits are shown by default:

To print the hard limit, use the -H option:

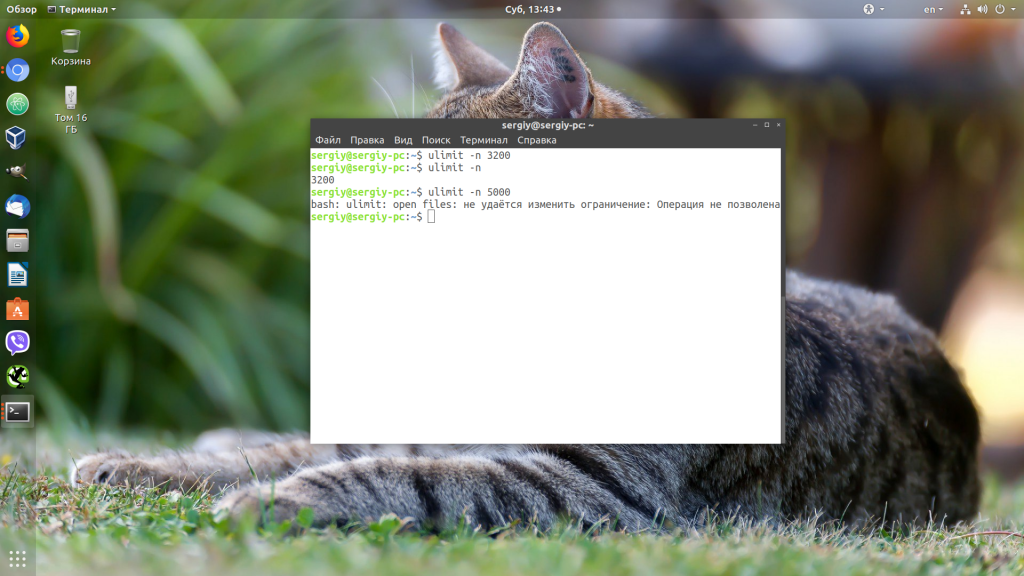
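As a quick illustration of the soft/hard relationship described above, the sketch below reads both limits for the current shell and checks that the soft one does not exceed the hard one. The helper name `limits_consistent` is hypothetical.

```shell
# Report the soft and hard open-file limits of the current shell.
soft=$(ulimit -Sn)
hard=$(ulimit -Hn)
echo "soft=$soft hard=$hard"

# Succeed if soft ($1) does not exceed hard ($2).
# "unlimited" is treated as larger than any number.
limits_consistent() {
    [ "$2" = "unlimited" ] || { [ "$1" != "unlimited" ] && [ "$1" -le "$2" ]; }
}

limits_consistent "$soft" "$hard" && echo "soft limit is within the hard limit"
```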

You can change the limit simply by passing a new value to ulimit:

ulimit -n 3000

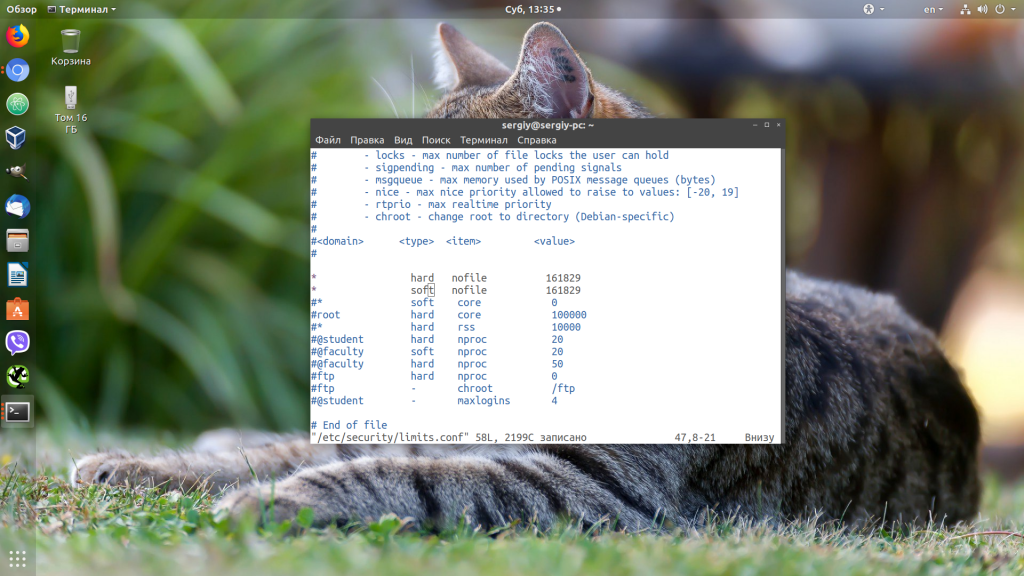

But since the hard limit is 4000, you cannot set the limit above that value. To change a user's limits permanently, edit /etc/security/limits.conf. Its syntax is:

Instead of a user name you can use an asterisk so that the change applies to all users on the system. The limit type is either soft or hard. The item name in our case is nofile. The last field is the desired value. Let's set the maximum, 1617596.

sudo vi /etc/security/limits.conf

* hard nofile 1617596

* soft nofile 1617596

You need to set both the soft and the hard value for the change to take effect. Also make sure that /etc/pam.d/common-session contains this line:

session required pam_limits.so

If it is missing, add it at the end. It is needed so that your limits are loaded when the user logs in.

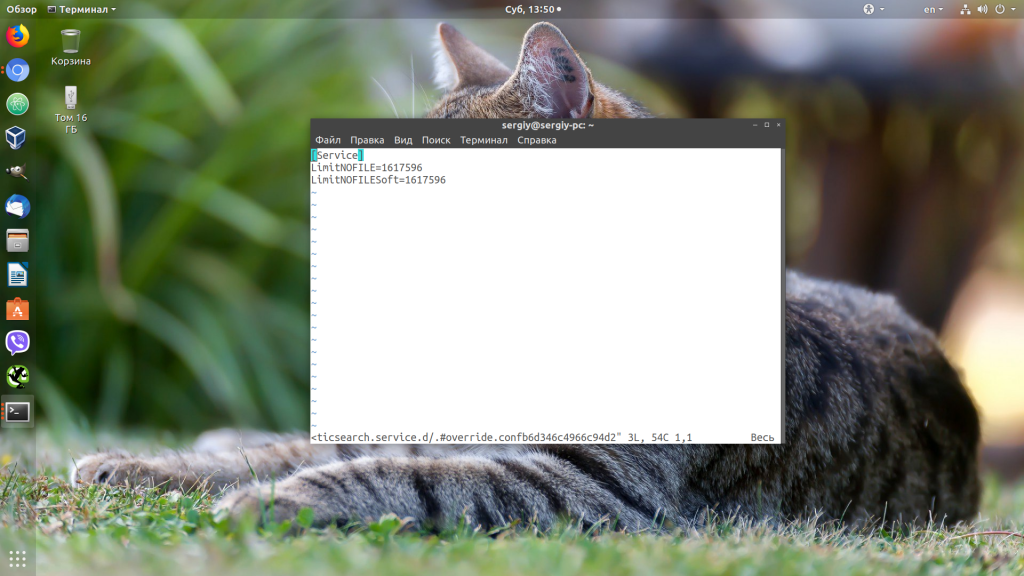

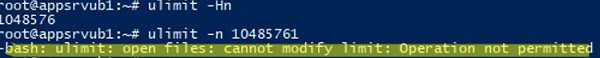

If you need to adjust the limits for a single service only, for example Apache or Elasticsearch, you don't have to change system-wide settings. You can do it with systemctl. Just run:

sudo systemctl edit service_name

And add the following lines to the file that opens:
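The lines themselves did not survive in this copy of the article. As a hedged sketch, a drop-in of this kind normally consists of a `[Service]` section with systemd's `LimitNOFILE=` directive. The helper below writes such a file by hand; the function name, the file name `limit_nofile.conf` and the value 65536 are illustrative assumptions, not the article's original numbers.

```shell
# Write a systemd drop-in that raises the open-file limit for one service.
# $1 = drop-in directory, $2 = limit (a single value sets both soft and hard).
write_nofile_dropin() {
    mkdir -p "$1" || return 1
    printf '[Service]\nLimitNOFILE=%s\n' "$2" > "$1/limit_nofile.conf"
}

# Example (as root), followed by daemon-reload and a service restart:
#   write_nofile_dropin /etc/systemd/system/nginx.service.d 65536
```

`systemctl edit` creates an equivalent override file for you, so the helper is mainly useful in provisioning scripts where no interactive editor is available.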

Here we set the maximum possible limit for both the hard and the soft parameter. Then close the file and reload the service configuration:

sudo systemctl daemon-reload

Then restart the service:

sudo systemctl restart service_name

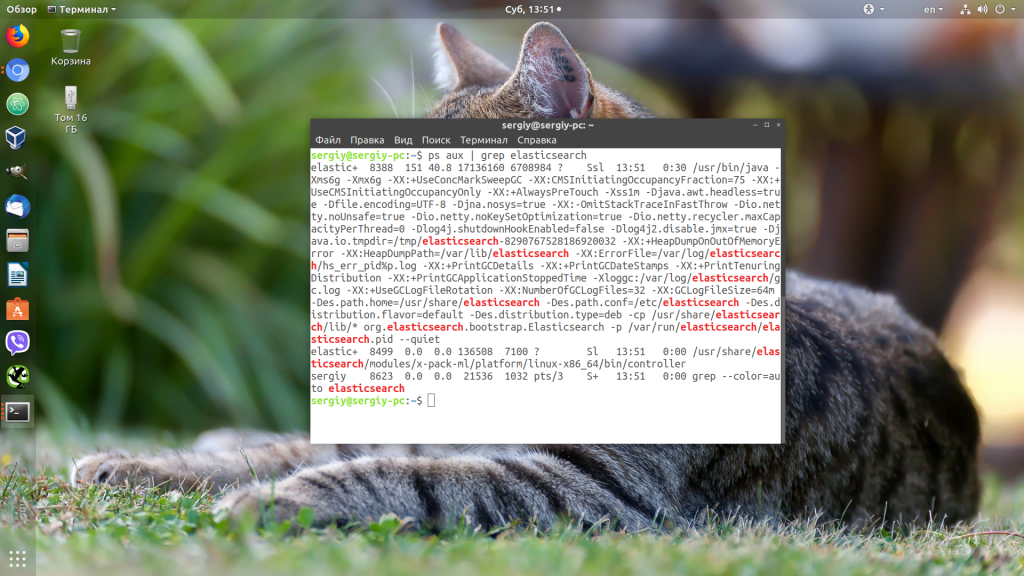

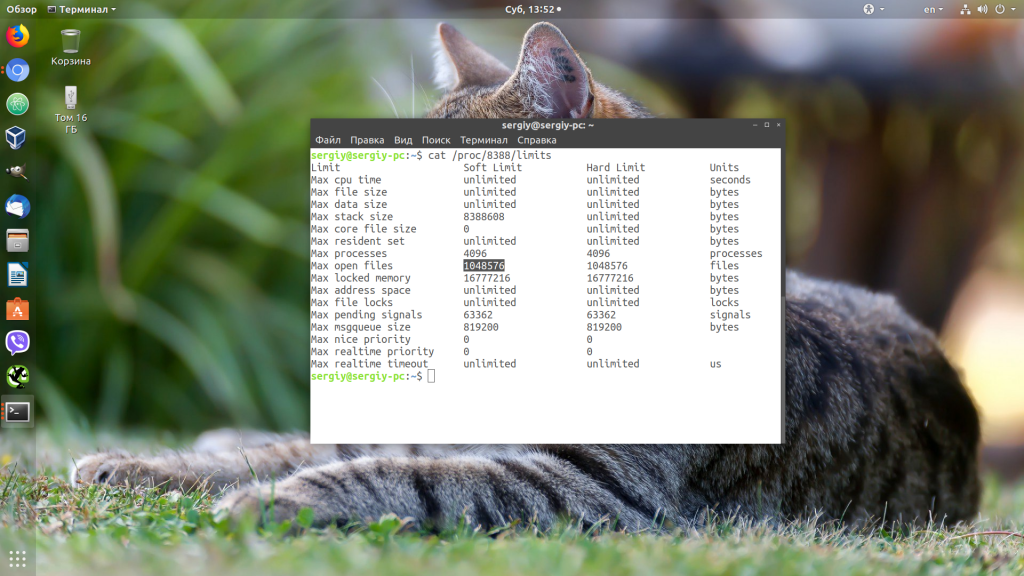

You can verify that the limits were applied to your service by looking at /proc/<service_pid>/limits. First find the PID of the service:

Then look at the limits:

This is my first VPS setup; I wouldn't be asking here if I hadn't done my due diligence, and I've tried to provide context.

On my remote VPS, almost every command I run in the terminal ends with the error message «Too many open files», and I need your help to move forward.

I'm running CentOS Linux release 7.6.1810 (Core) on a machine with 1 CPU core and 2048 MB of RAM. It was set up with a LEMP stack (Nginx 1.16.1, PHP-FPM 7.3.9, MariaDB 10.4.8) intended for a simple WordPress site.

- Google and forum searches.

- Applied the following settings (restarting the VPS manually through the control panel each time):

System-wide settings in /etc/security/limits.conf:

nginx soft nofile 1024

nginx hard nofile 65536

root hard nofile 65536

root soft nofile 1024

Memory and upload limits in /etc/php.ini:

memory_limit = 256M

file_uploads = On

upload_max_filesize = 128M

max_execution_time = 600

max_input_time = 600

max_input_vars = 3000

PHP rlimit settings in /etc/php-fpm.d/www.conf:

rlimit_files = 65535

NGINX limits (and other settings) in nginx.conf:

user nginx;

worker_processes 1;

error_log /var/log/nginx/error.log warn;

pid /var/run/nginx.pid;

events {

worker_connections 10000;

}

worker_rlimit_nofile 100000;

http {

include /etc/nginx/mime.types;

default_type application/octet-stream;

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

access_log /var/log/nginx/access.log main;

sendfile on;

#tcp_nopush on;

keepalive_timeout 65;

client_body_buffer_size 128k;

client_header_buffer_size 10k;

client_max_body_size 100m;

large_client_header_buffers 4 256k;

#gzip on;

include /etc/nginx/conf.d/*.conf;

include /etc/nginx/sites-enabled/*.conf;

server_names_hash_bucket_size 64;

}

Here is the output of cat /proc/sys/fs/file-nr:

45216 0 6520154

root 928 0.0 0.0 46440 1192 ? Ss 00:25 0:00 nginx: master process /usr/sbin/nginx -c /etc/nginx/nginx.conf

nginx 929 0.0 0.2 50880 6028 ? S 00:25 0:00 nginx: worker process

nginx 9973 0.0 0.1 171576 4048 ? S 04:28 0:00 php-fpm: pool www

nginx 9974 0.0 0.1 171576 4048 ? S 04:28 0:00 php-fpm: pool www

nginx 9975 0.0 0.1 171576 4048 ? S 04:28 0:00 php-fpm: pool www

nginx 9976 0.0 0.1 171576 4048 ? S 04:28 0:00 php-fpm: pool www

nginx 9977 0.0 0.1 171576 4052 ? S 04:28 0:00 php-fpm: pool www

Switching to the nginx user with su - nginx and checking the limits:

ulimit -Sn returns 1024

ulimit -Hn returns 65536

I hope you can point me in the right direction to solve this too-many-open-files problem!

EDIT

The following command shows additional information:

service nginx restart

Redirecting to /bin/systemctl restart nginx.service

Error: Too many open files

Job for nginx.service failed because a configured resource limit was exceeded. See "systemctl status nginx.service" and "journalctl -xe" for details.

[root@pars ~]# systemctl status nginx.service

● nginx.service - nginx - high performance web server

Loaded: loaded (/usr/lib/systemd/system/nginx.service; enabled; vendor preset: disabled)

Drop-In: /usr/lib/systemd/system/nginx.service.d

└─worker_files_limit.conf

Active: failed (Result: resources) since Fri 2019-09-13 05:32:23 CEST; 14s ago

Docs: http://nginx.org/en/docs/

Process: 1113 ExecStop=/bin/kill -s TERM $MAINPID (code=exited, status=0/SUCCESS)

Process: 1125 ExecStart=/usr/sbin/nginx -c /etc/nginx/nginx.conf (code=exited, status=0/SUCCESS)

Main PID: 870 (code=exited, status=0/SUCCESS)

CGroup: /system.slice/virtualizor.service/system.slice/nginx.service

Sep 13 05:32:22 pars.work systemd[1]: Starting nginx - high performance web server...

Sep 13 05:32:22 pars.work systemd[1]: PID file /var/run/nginx.pid not readable (yet?) after start.

Sep 13 05:32:22 pars.work systemd[1]: Failed to set a watch for nginx.service's PID file /var/run/nginx.pid: Too many open files

Sep 13 05:32:23 pars.work systemd[1]: Failed to kill control group: Input/output error

Sep 13 05:32:23 pars.work systemd[1]: Failed to kill control group: Input/output error

Sep 13 05:32:23 pars.work systemd[1]: Failed to start nginx - high performance web server.

Sep 13 05:32:23 pars.work systemd[1]: Unit nginx.service entered failed state.

Sep 13 05:32:23 pars.work systemd[1]: nginx.service failed.

- ‘Too Many Open Files’ Error and Open Files Limit in Linux

- File-max vs ulimit

- fs.file-max

- fs.file-nr

- ulimit

- In-depth analysis

- How to Increase the Max Open Files Limit in Linux?

- Solution

- Changing the ulimit default for docker daemon

- /etc/security/limits.conf

- Modifying the calculation

- Modify the fallback

- Not playing with you: configuration items

- Change the Open File Limit for the Current User Session

- How to Set Max Open Files for Nginx and Apache?

- Preliminary analysis

- Increase the Maximum Number of Open File Descriptors per Service

- Problem phenomenon

- Conclusions

- About the author

‘Too Many Open Files’ Error and Open Files Limit in Linux

First of all, let’s see where ‘too many open files’ errors appear. Most often they occur on servers running an Nginx/httpd web server or a MySQL/MariaDB/PostgreSQL database server, or when reading a large number of log files. For example, when Nginx exceeds the open files limit, you will see an error like:

socket() failed (24: Too many open files) while connecting to upstream

HTTP: Accept error: accept tcp [::]:<port_number>: accept4: too many open files.

In Python apps:

OSError: [Errno 24] Too many open files.

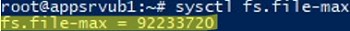

Using this command, you can get the maximum number of file descriptors your system can open:

# cat /proc/sys/fs/file-max

To find out how many files are currently open, run:

# cat /proc/sys/fs/file-nr

7122 123 92312720

- 7122: number of allocated file handles

- 123: number of allocated file handles that are not currently in use

- 92312720: maximum number of file handles that can be allocated
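To turn those three numbers into something easier to read, a short helper can compute how many handles are actually in use and what share of the maximum that is. This is a sketch; the function name `file_nr_summary` is made up for illustration.

```shell
# Read a file-nr style line ("allocated unused max") from stdin and
# print "in_use max percent_used".
file_nr_summary() {
    awk '{ used = $1 - $2; printf "%d %s %.2f\n", used, $3, used * 100 / $3 }'
}

# Usage on a live system:
#   file_nr_summary < /proc/sys/fs/file-nr
echo "7122 123 92312720" | file_nr_summary
```

A percentage close to 100 points at the system-wide limit; if it is low while a single service still reports the error, the per-process limit is the one to look at.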

In Linux, you can configure max open files limits at several levels:

- OS kernel

- Service

- User

To display the current limit on the number of open files in the Linux kernel, run:

# sysctl fs.file-max

fs.file-max = 92233720

# ulimit -n

By default, the soft limit on open files for a single process is 1024. The related per-user limit on the number of processes is shown by:

# ulimit -u

5041

To view the current limits, use the ulimit command with the -S (soft) or -H (hard) option and the -n (the maximum number of open file descriptors) option.

To display the soft limit, run this command:

# ulimit -Sn

To display the hard limit value:

# ulimit -Hn

File-max vs ulimit

fs.file-max

First, there is a system-wide limit on the number of files that can be open on Linux.

- This value is related to OS, hardware resources, and may be different for different systems. For example, the value above is on a physical server, but on a virtual machine it is only 808539; the value may also be different for the same hardware and different os

- This value expresses the max value of open files at the system level, and has nothing to do with a user or a session

- This value is changeable! If you run some database or web server, the default file-max is likely to be insufficient due to the need to open a large number of files, which can then be modified by sysctl.

Alternatively, add fs.file-max=500000 to sysctl.conf and run sysctl -p for it to take effect.

fs.file-nr

ulimit

What about ulimit, is it system level? No, this is a classic misconception. In fact, ulimit limits the resources that a process can use.

If it’s on the host, you can modify ulimit by modifying /etc/security/limits.conf.

In-depth analysis

Back to the original question.

Our nginx ingress controller is running in a container, so the number of files that the nginx worker can open depends on the ulimit in the container.

As the earlier logs show, the ulimit inside the container allows at most 65535 open files. ingress-nginx starts one nginx worker per CPU core and divides this limit among the workers, so each worker is given 65535 / (number of CPU cores). On this 56-core host that is 65535 / 56 = 1170.

So where does the maximum number of open files per nginx worker of 1024 come from?

Let’s look at how it is calculated in ingress-nginx.

This means that 1024 descriptors are reserved for the worker itself (after all, it has to open some .so, .a and similar files) and the rest are used to process web requests. If the result is less than 1024, it is raised to 1024, which is why we saw earlier that the maximum number of open files per nginx worker is 1024.
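The arithmetic described here can be reproduced in a few lines of shell. This is a sketch of the calculation as the text describes it, not the controller's actual code; the function name `worker_max_open_files` is hypothetical.

```shell
# $1 = open-file limit seen by the controller, $2 = number of workers (CPU cores).
# Divide the limit between workers, reserve 1024 per worker, and never go below 1024.
worker_max_open_files() {
    per_worker=$(( $1 / $2 - 1024 ))
    if [ "$per_worker" -lt 1024 ]; then
        per_worker=1024
    fi
    echo "$per_worker"
}

# 65535 / 56 = 1170; 1170 - 1024 = 146, which is below the floor of 1024.
worker_max_open_files 65535 56
```

With a much larger limit, for example 1048576 on 4 cores, the same function returns 261120, which shows how strongly the result depends on the core count the controller sees.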

How to Increase the Max Open Files Limit in Linux?

username restriction_type restriction_name value

apache hard nofile 978160

apache soft nofile 978160

* hard nofile 97816

* soft nofile 97816

$ sudo -u nginx bash -c 'ulimit -n'

1024

nginx hard nofile 50000

nginx soft nofile 50000

On older Linux kernels, the value of fs.file-max may be set to 10000. So check this value and increase it so that it is greater than the number in limits.conf:

# sysctl -w fs.file-max=500000

fs.file-max = 500000

And apply it:

# sysctl -p

Check that the file /etc/pam.d/common-session (Debian/Ubuntu) or /etc/pam.d/login (CentOS/RedHat/Fedora) contains the line:

session required pam_limits.so

After making any changes, re-open the console, and check the max_open_files value:

# ulimit -n

50000

Solution

Now the problem is clear: it is the maximum number of files an nginx worker can open. How do we raise it?

Can ulimit do it?

The first solution that comes to mind is to modify ulimit.

However, since nginx for the ingress controller is running in a container, we need to modify the limit in the container.

As mentioned before, ulimit represents the limits of the “process”, what about the ulimit of the child processes of the process? We’ll go back to bash to see if the container is the same as before.

As you can see, the ulimit of the child process (the new bash) and the ulimit of the parent process (the bash started by docker run) are the same.

Therefore, we can find a way to pass this parameter to the nginx worker, so that we can control the maximum number of open files for the nginx worker.

This method does not work.

Changing the ulimit default for docker daemon

The ulimit value of processes in containers is inherited from docker daemon, so we can modify the configuration of docker daemon to set the default ulimit so that we can control the ulimit value of containers running on the ingress machine (including the ingress container itself). Modify /etc/docker/daemon.json.
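The configuration itself is not shown in this copy. As a hedged sketch, Docker's daemon.json accepts a `default-ulimits` section; the helper below writes one. The function name and the values passed to it are illustrative assumptions, and the function overwrites its target file, so an existing daemon.json with other keys would need to be merged by hand.

```shell
# Write a daemon.json that sets default nofile ulimits for new containers.
# $1 = output path, $2 = soft limit, $3 = hard limit.
write_docker_default_ulimits() {
    cat > "$1" <<EOF
{
  "default-ulimits": {
    "nofile": {
      "Name": "nofile",
      "Soft": $2,
      "Hard": $3
    }
  }
}
EOF
}

# Example (as root), followed by a restart of the docker daemon:
#   write_docker_default_ulimits /etc/docker/daemon.json 65535 65535
```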

This approach works, but it is somewhat awkward, so we set it aside for now.

/etc/security/limits.conf

Since you can modify ulimit on the host through /etc/security/limits.conf, can’t you do the same in the container?

Let’s make a new image and overwrite the /etc/security/limits.conf in the original image.

Build a new image, named xxx, start it without setting --ulimit, and check the limit.

Unfortunately, docker doesn’t use the /etc/security/limits.conf file, so it doesn’t work either.

Modifying the calculation

This issue was actually encountered by someone in 2018 and submitted PR 2050.

The author’s idea: since ulimit can’t be set, set fs.file-max inside the container instead (for example with an init container) and change how ingress-nginx computes the limit.

Set in init Container.

The change in ingress nginx is also very simple, the previous calculation is not modified, just the implementation of sysctlFSFileMax is changed from getting ulimit to fs/file-max (obviously, the original comment was wrong, because it was actually ulimit, process-level, not fs.file-max).

Even though the ingress is in a container, since we have a whole physical machine that is used as the ingress, we don’t need the init Container either, and it works just fine as calculated above.

The way is clear!

Modify the fallback

However, this PR predates the version of ingress nginx I use, and in my version, ulimit is still used (of course the comments are still wrong).

It should be said that it is reasonable to use ulimit, because indeed nginx is in a container and it is not reasonable to allocate all host resources to the container.

However, docker’s isolation is incomplete: the default nginx ingress controller calculates the number of nginx workers from runtime.NumCPU(), so even if the nginx container is assigned 4 CPU cores, the number of workers is still based on the host’s CPU count.

But you can’t say that the current calculation is wrong and shouldn’t be divided by the number of CPUs, because ulimit is indeed “process” level, and nginx workers are indeed threads.

OK, according to the above understanding, as long as you can set the ulimit of nginx container, everything will be fine, however, kubernetes does not support it.

Not playing with you: configuration items

It finally cleared up.

Change the Open File Limit for the Current User Session

# ulimit -n 3000

If you specify a value here greater than that specified in the hard limit, an error will appear:

-bash: ulimit: open files: cannot modify limit: Operation not permitted

After closing the session and opening a new one, the limits will return to the initial values specified in /etc/security/limits.conf.

In this article, we have learned how to solve the issue when the value of open file descriptors limit in Linux is too small, and looked at several options for changing these limits on the server.

How to Set Max Open Files for Nginx and Apache?

worker_rlimit_nofile 16000;

Then check the configuration and reload Nginx.

# nginx -t && service nginx reload

For Apache, you need to create a directory:

# mkdir /lib/systemd/system/httpd.service.d/

Then create the limit_nofile.conf file:

# nano /lib/systemd/system/httpd.service.d/limit_nofile.conf

Add to it:

[Service]

LimitNOFILE=16000

Don’t forget to restart the httpd service.

Preliminary analysis

As you can see, an nginx worker can open at most 1024 files, and the process we inspected already has 910 open; under heavier traffic it is likely to hit the limit. Also note the ulimit value, which comes up again later.

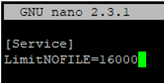

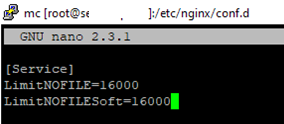

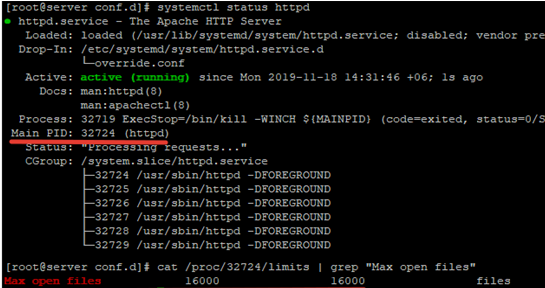

Increase the Maximum Number of Open File Descriptors per Service

You can increase the max open file descriptors for a specific service, rather than for the entire operating system. Let’s take apache as an example. Open the service settings using systemctl:

# systemctl edit httpd.service

Add the limits you want, e.g.:

[Service]

LimitNOFILE=16000

After making the changes, update the service configuration, and restart it:

# systemctl daemon-reload

# systemctl restart httpd.service

To check if the values have changed, get the service PID:

# systemctl status httpd.service

For example, the service PID is 3724:

The value must be 16000.
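The check for the example PID can be scripted. The sketch below reads a limits file and succeeds only if both the soft and the hard "Max open files" values match the expected number; the function name `nofile_is` is made up for illustration.

```shell
# Succeed if the limits file ($1) reports soft and hard "Max open files" equal to $2.
nofile_is() {
    awk -v want="$2" '/^Max open files/ { found = 1; ok = ($4 == want && $5 == want) }
                      END { exit !(found && ok) }' "$1"
}

# Example for the PID mentioned above:
#   nofile_is /proc/3724/limits 16000 && echo "limit applied"
```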

Thus, you have changed Max open files value for a specific service.

Problem phenomenon

Our Kubernetes ingress controller is ingress-nginx from the Kubernetes project, and we recently encountered a «Too many open files» problem.

Conclusions

In this short article we looked at what to do when the «too many open files» error occurs in Linux, and how to change the limits on the number of open files for a user and for a process.


About the author


Founder and administrator of losst.ru. I'm keen on open-source software and the Linux operating system, and currently use Ubuntu as my main OS. Besides Linux, I'm interested in everything related to information technology and modern science.